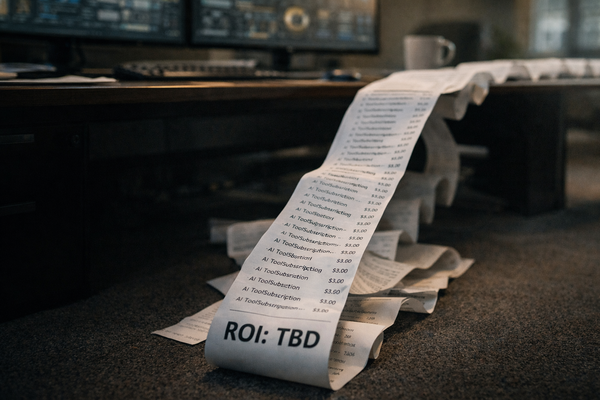

AI budgets are growing. Accountability for those budgets is not.

Most marketing leaders can tell you exactly what they spent on AI tools last quarter. Very few can tell you what it returned. That disconnect is a measurement problem, and it’s one of the most expensive blind spots in business today.

The pressure to adopt AI is real. Competitors are moving, vendors are pitching, and boards are asking questions. So budgets get approved, tools get purchased, and teams get busy. But busy is not the same as effective, and activity is not the same as ROI.

Without a model to measure what AI produces, you’re essentially running experiments with no control group and no scoreboard. Some of those experiments will pay off; many will not. And because no one is tracking outcomes in any structured way, you’ll keep funding both the winners and the losers with equal enthusiasm.

That’s the situation a significant number of marketing organizations find themselves in right now, because no one handed them a framework for measuring something this new.

AI Spending Without Measurement Is a Losing Bet

There’s a version of AI adoption that feels like progress but functions like waste. It looks like a growing stack of subscriptions, along with:

A team that’s "using AI"

A handful of wins that get mentioned in all-hands meetings

And a finance team that keeps asking the same question nobody can fully answer: what is this worth?

The uncomfortable truth is that most organizations adopted AI tools the same way they adopted social media a decade ago: fast, reactive, and without a measurement system in place. The difference is that social media mistakes were relatively cheap. AI budgets are not.

According to a growing body of research on enterprise AI adoption, the majority of companies report difficulty quantifying the business impact of their AI investments. Tools get purchased at the team level, usage varies, and outcomes go untracked. And when budget season arrives, decisions about what to renew or expand get made on gut feel rather than data.

For marketing leaders specifically, this creates a compounding problem. Marketing is already a function that fights for measurement credibility. Adding AI to the mix without a clear ROI model doesn’t make that fight easier; it hands skeptics more ammunition.

The Real Cost of Flying Blind

When there’s no measurement framework in place, a few things happen reliably:

First, the tools that get the most internal visibility aren’t necessarily the ones producing the most value. They’re the ones with the loudest champions. A content writer who loves an AI drafting tool will talk about it. A process that quietly saves your ops team 6 hours a week will not make it into a slide deck unless someone is tracking it.

Second, underperforming tools stay in the stack. Without outcome data, there is no trigger to cancel, consolidate, or redirect spend. The average marketing tech stack already has more tools than any team uses effectively. AI adds another layer without the discipline to match.

Third, and most damaging: you lose the ability to scale what works. If you can’t identify which AI applications are generating real returns, you can’t double down on them with confidence. Every investment becomes a one-off experiment instead of a building block.

This Is a Solvable Problem

The good news is that an AI ROI problem isn’t a technology problem. You don’t need a data science team or a custom attribution platform to start measuring effectively. You need a model, a baseline, and the discipline to track outcomes consistently over time.

That starts with understanding what ROI looks like when AI is involved, because it doesn’t always show up where finance is looking for it. Revenue impact matters, but it’s rarely the only place value is being created or destroyed. Time, quality, and cost carry weight too, and most organizations aren’t capturing any of them in a systematic way.

The framework exists. Most teams just haven’t been given it yet.

What an AI ROI Model Actually Looks Like

Most leaders hear "ROI model" and picture a spreadsheet with a clean formula at the bottom. Revenue in, cost out, percentage calculated. That model works well for a media buy. For AI, it falls apart fast, because AI does not create value in a single, traceable line. Value gets created across multiple dimensions simultaneously, and measuring only one of them means you’re almost certainly undercounting the rest.

A functional AI ROI model accounts for 4 value categories: time recovered, revenue influenced, cost reduced, and quality improved. Each one matters. Each requires its own evaluation approach, and none of them can substitute for the others.

Time Recovered

This is the most immediate form of value AI creates, and consistently the most undercounted. When a tool cuts a 3-hour task down to 45 minutes, that time goes somewhere. The question is whether it goes somewhere productive or quietly disappears into the day.

Time recovered only becomes ROI when it gets redirected toward work that produces measurable outcomes. A copywriter who gets three hours back from AI-assisted drafting needs to deploy those hours into strategy, client work, or higher-leverage creative. Without that intentional redirection, the time evaporates and the ROI disappears with it.

Tracking this requires two things: a baseline of how long key tasks took before AI, and a consistent record of where recovered time is going. Neither is complicated. Both are almost universally skipped.
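As a rough sketch of those two records, the arithmetic can be as small as the Python below. The field names, the four runs per week, and the 270 redirected minutes are illustrative assumptions, not figures from any real team; only the 3-hour and 45-minute task times come from the example above.

```python
from dataclasses import dataclass


@dataclass
class TaskTiming:
    """One tracked task. All field names here are hypothetical."""
    task: str
    baseline_minutes: float    # how long the task took before AI
    current_minutes: float     # how long it takes with AI
    runs_per_week: int         # how often the task happens each week
    redirected_minutes: float  # recovered minutes per week logged against productive work


def weekly_minutes_recovered(t: TaskTiming) -> float:
    """Minutes saved per week; never negative if the tool made things slower."""
    return max(t.baseline_minutes - t.current_minutes, 0) * t.runs_per_week


def redirected_share(t: TaskTiming) -> float:
    """Fraction of recovered time that was deliberately redeployed (0.0 to 1.0)."""
    recovered = weekly_minutes_recovered(t)
    if recovered == 0:
        return 0.0
    return min(t.redirected_minutes / recovered, 1.0)


# A 3-hour task cut to 45 minutes, done four times a week (assumed frequency).
drafting = TaskTiming("first draft", 180, 45, 4, 270)
print(weekly_minutes_recovered(drafting))  # 540 minutes recovered per week
print(redirected_share(drafting))          # 0.5, so half the recovered time is accounted for
```

The second number is the one that matters: recovered time that never gets logged against productive work is the time that evaporates.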

Revenue Influenced

This category covers AI applications that touch the revenue side of the business: faster campaign execution, better personalization, improved lead scoring, and conversion copy that outperforms previous benchmarks. These are real, trackable outcomes, but they require attribution discipline to assess accurately.

The trap here is giving AI credit for outcomes it didn’t drive, or failing to give it credit for outcomes it did. Both distort your model.

Revenue influence should be tracked at the campaign or initiative level, with clear documentation of where AI was applied and what changed as a result. It doesn’t need to be a controlled trial. Consistency is what matters, maintained long enough that patterns emerge.

Cost Reduced

Finance understands this category best, which makes it the easiest to report and the easiest to over-index on. AI can reduce costs through headcount efficiency, vendor consolidation, faster production cycles, and reduced error rates. Those are legitimate returns.

The risk is building an AI strategy that optimizes entirely for cost reduction while ignoring the value categories that drive growth. A leaner operation that produces worse output is not a win. Cost reduction carries the most weight when it happens alongside improvement in at least one of the other three categories.

Quality Improved

This is the hardest category to measure and the one with the longest payoff window. When AI helps a team produce more consistent output, catch errors earlier, or make better decisions with better information, the value is real but diffuse. It surfaces in customer retention, brand perception, and reduced rework, none of which trace cleanly back to a single tool.

Quality improvements need to be tracked at the output level. That means establishing standards before AI is applied and measuring against those standards consistently afterward. Slow work, yes. Also the category most likely to produce durable competitive advantage.

Why All Four Categories Must Be Tracked Together

A model that only tracks one or two of these categories produces a misleading picture. An AI tool that looks expensive on cost grounds may be generating enormous time and quality returns that offset that cost several times over. One that looks like a revenue driver may be quietly degrading quality in ways that will not show up for months.

The full model isn’t complicated. It’s 4 columns, consistently maintained, with honest numbers in each one. That discipline, applied across your AI stack, gives you something most marketing organizations do not have: a clear, defensible picture of where AI is earning its place.

The Metrics Marketing Leaders Miss Most

Having a framework is one thing. Knowing where your current system has holes is another.

These are the blind spots that show up most consistently, and the ones most likely to be distorting your picture right now.

Attribution

Marketing has an attribution problem that predates AI by decades. Adding AI into the mix doesn't fix that problem. In most cases, it makes the problem harder to see because AI contributions are woven into the work rather than sitting beside it.

When a campaign performs well after AI-assisted audience segmentation, the segmentation rarely gets the credit. When a sales team closes faster because AI improved their outreach copy, the copy rarely shows up in the win report. Credit flows to the channel, the rep, or the campaign, while the AI layer that sharpened all of it goes unmeasured.

Closing this requires a different documentation habit. Before AI is applied to any initiative, note what the baseline looks like. After the initiative runs, compare outcomes to that baseline. Simple, unglamorous, and almost no one's doing it consistently.
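That habit can be captured in a few lines. The Python below is a minimal sketch with made-up numbers and a hypothetical log format; it records where AI was applied and reports the change against the baseline. A lift like this is a consistent comparison point, not proof that AI caused the change.

```python
def change_vs_baseline(baseline: float, observed: float) -> float:
    """Relative change of observed over baseline, e.g. 0.25 means +25%."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute a relative change")
    return (observed - baseline) / baseline


# Hypothetical initiative log: one row per initiative where AI was applied.
initiative_log = [
    {
        "initiative": "spring email campaign",
        "ai_applied": "audience segmentation",
        "metric": "conversions",
        "baseline": 200,   # noted before AI was applied
        "observed": 250,   # measured after the initiative ran
    },
]

for row in initiative_log:
    lift = change_vs_baseline(row["baseline"], row["observed"])
    print(f'{row["initiative"]} ({row["ai_applied"]}): {row["metric"]} {lift:+.0%} vs baseline')
```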

Productivity

Time savings are the most frequently cited benefit of AI adoption. They're also the most frequently uncounted benefit, because most organizations don't have a system for capturing them.

A team member who saves 90 minutes a day using AI-assisted research is generating real value. Whether that value reaches the business depends entirely on what happens to those 90 minutes. Without a tracking system, there's no way to know. Time savings exist in anecdotes and offhand comments, never in a report that influences budget decisions.

Downstream Effects

Some of the strongest AI returns accumulate over time in places most dashboards aren't pointed at.

Faster content production means more tests run per quarter, which means faster learning cycles. Better AI-assisted briefs mean fewer revision rounds, which means lower agency costs and faster go-to-market. Improved data hygiene from AI-assisted list management means better deliverability, which means more revenue from email over time. None of these show up in a weekly performance report. All of them are real.

Capturing downstream effects requires connecting dots across systems that don't typically talk to each other. It's harder work than tracking a campaign click-through rate. The payoff is a much more complete picture of what your AI investment is producing.

Vanity Metrics Dressed Up as ROI

This one cuts in the opposite direction. Not every positive number is a meaningful return, and the AI space has produced more than its share of metrics that feel significant but carry little weight:

Prompts run per month

Features used

Time spent inside a tool

These numbers measure activity, not outcomes. A team that runs 500 prompts a month and produces mediocre work isn't generating ROI. A team that runs 50 prompts and consistently cuts production time in half is generating substantial ROI. Volume of usage isn't a proxy for value.

The discipline here is always asking the same question: what changed in the business because of this activity? If the answer's vague or unmeasurable, the metric doesn't belong in your ROI model.

Incomplete Measurement

Each of these blind spots carries its own cost. Together, they create a picture so incomplete that it actively undermines good decision-making.

Budget goes to the tools with the loudest advocates rather than the strongest returns. Investments that are quietly working get cut because the evidence for them is invisible. Investments that feel good but produce little keep getting renewed because nobody's built the case to cut them.

Fixing your measurement model doesn't require a platform overhaul or a data science hire. It requires identifying which blind spots apply to your organization and putting simple tracking systems in place before the next budget cycle forces the conversation.

How to Build Your AI ROI Baseline Before You Invest More

Before you approve another AI tool, renew an existing subscription, or expand usage across your team, you need a baseline. Without one, you're measuring progress against nothing, and progress against nothing isn't a measurement at all.

Building a baseline doesn't require a consultant or a six-week audit. It requires honesty about where you are right now and a simple system for capturing what changes from here.

Start With What You're Already Spending

Pull every AI-related line item from your current budget. Subscriptions, seat licenses, usage-based tools, any AI features bundled inside platforms you're already paying for. Most marketing leaders, when they do this exercise for the first time, find the number is larger than they expected and the overlap is wider than they realized.

For each tool, document 3 things: what it's supposed to do, who's using it, and what evidence exists that it's producing value. If the third column is empty or filled with anecdotes, that's your starting point.
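If a spreadsheet feels too loose, the same inventory fits in a short script. This is a sketch only: the tool names, costs, and field names are invented for illustration, and the `evidence` list is meant to hold measured outcomes, with anecdotes deliberately left out.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AITool:
    """One line item in the AI inventory. All names and values are hypothetical."""
    name: str
    monthly_cost: float
    purpose: str       # what it's supposed to do
    users: List[str]   # who's using it
    evidence: List[str] = field(default_factory=list)  # measured outcomes only, not anecdotes


def needs_evidence(tools: List[AITool]) -> List[str]:
    """Names of tools whose evidence column is empty."""
    return [t.name for t in tools if not t.evidence]


def total_monthly_spend(tools: List[AITool]) -> float:
    return sum(t.monthly_cost for t in tools)


stack = [
    AITool("Drafting Tool A", 300.0, "first drafts", ["content team"],
           ["time to first draft down from 180 to 45 minutes"]),
    AITool("Chat Tool B", 150.0, "research", ["strategy team"]),
]

print(total_monthly_spend(stack))  # 450.0
print(needs_evidence(stack))       # ['Chat Tool B']
```

Whatever `needs_evidence` returns is the starting point for the next step.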

Define What "Working" Looks Like for Each Application

This step gets skipped more than any other, and it's the one that makes everything else possible. For every AI application in your stack, there needs to be a specific, measurable definition of success before outcomes can be tracked against it.

That definition doesn't need to be elaborate. For an AI writing tool, it might be average time to first draft, revision rounds per piece, or content output per team member per week. For an AI-assisted email platform, it might be deliverability rate, open rate trajectory, or revenue per send. The specific metrics matter less than the commitment to track them consistently.

Without this definition in place, every conversation about whether a tool is working devolves into opinion. With it, the data makes the argument for you.

Establish Your Pre-AI Benchmarks

For any application where outcomes haven’t been tracked, you need a short period to establish a baseline before AI is fully deployed or the next renewal decision arrives.

Two to four weeks of consistent tracking is usually enough to establish a usable benchmark. Document task completion times, output volumes, error rates, revision cycles, whatever metrics align with the success definition you set in the previous step. These numbers don't need to be perfect, just honest and consistent enough to serve as a comparison point later.
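One way to turn a few weeks of observations into a benchmark is sketched below in Python. Using the median (so one unusually slow week doesn't skew the number) and requiring at least five samples are both assumptions made for illustration; the metric names and values are invented.

```python
from statistics import median
from typing import Dict, List


def build_benchmark(observations: Dict[str, List[float]],
                    min_samples: int = 5) -> Dict[str, float]:
    """Median per metric, skipping metrics with too few observations to trust."""
    benchmark = {}
    for metric, values in observations.items():
        if len(values) >= min_samples:
            benchmark[metric] = median(values)
    return benchmark


# Hypothetical tracking data collected before AI is fully deployed.
tracked = {
    "minutes_to_first_draft": [170, 185, 190, 175, 180, 200],
    "revision_rounds": [3, 2],  # too few samples so far, so no benchmark yet
}

print(build_benchmark(tracked))  # {'minutes_to_first_draft': 182.5}
```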

If a tool is already deployed and no baseline exists, don't let that stop you. Start tracking now. In 90 days you'll have a baseline. In 6 months you'll have a trend. That's more than most organizations have, and it's enough to make real decisions with.

Build a Simple Tracking Rhythm

A baseline is only useful if something gets measured against it.

A weekly team check-in that includes three questions covers most of what you need: what AI-assisted work happened this week, how long it took compared to the previous approach, and what the outcome was. Recorded consistently in a shared document, those answers accumulate into something meaningful faster than most leaders expect.

Monthly, roll those inputs into a simple scorecard across your 4 value categories: time recovered, revenue influenced, cost reduced, quality improved. One number per category per tool. Reviewed once a month, updated honestly, shared with whoever controls the budget.
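The monthly roll-up described above can be sketched as a small Python function. The entry format, the tool names, and the units (hours for time, dollars for revenue and cost, avoided revision rounds for quality) are assumptions chosen for the example; pick whatever units match your own success definitions and keep them consistent.

```python
from collections import defaultdict

CATEGORIES = ("time_recovered", "revenue_influenced", "cost_reduced", "quality_improved")


def monthly_scorecard(entries):
    """Sum weekly check-in entries into one number per category per tool.

    entries: iterable of (tool, category, value) tuples.
    """
    card = defaultdict(lambda: dict.fromkeys(CATEGORIES, 0.0))
    for tool, category, value in entries:
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category}")
        card[tool][category] += value
    return dict(card)


# Hypothetical entries pulled from a month of weekly check-ins.
entries = [
    ("drafting tool", "time_recovered", 9.0),        # hours
    ("drafting tool", "time_recovered", 7.5),        # hours
    ("drafting tool", "quality_improved", 2),        # revision rounds avoided
    ("email platform", "revenue_influenced", 1200.0),  # dollars
]

for tool, scores in monthly_scorecard(entries).items():
    print(tool, scores)
```

Categories with no entries stay at zero on purpose: an empty column is information too.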

That cadence, maintained for a single quarter, produces more actionable insight than most organizations have generated in their entire AI adoption period.

Know When to Cut and When to Scale

A baseline exists to inform decisions, and the two most important decisions in any AI stack are where to invest more and where to stop investing entirely.

A tool that scores consistently across multiple value categories deserves more budget, broader adoption, and deeper integration into your workflows. A tool that produces strong activity metrics but weak outcome metrics deserves a hard conversation. One that can't demonstrate value in any of the four categories after a fair evaluation period doesn't deserve a renewal.
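Those three outcomes can be written down as an explicit rule so the decision doesn't drift back to gut feel. The Python below is a sketch under stated assumptions: "multiple categories" is read as two or more, any score above zero counts as demonstrated value, and "review" stands in for the hard conversation. Tune all three to your own organization.

```python
from typing import Dict


def renewal_decision(scores: Dict[str, float], min_categories: int = 2) -> str:
    """Map a tool's scorecard to scale / review / do not renew.

    scores: one number per value category, taken after a fair evaluation period.
    """
    categories_with_value = sum(1 for value in scores.values() if value > 0)
    if categories_with_value == 0:
        return "do not renew"  # no demonstrated value in any category
    if categories_with_value >= min_categories:
        return "scale"         # value across multiple categories
    return "review"            # some value, but not enough to expand on


print(renewal_decision({"time_recovered": 16.5, "revenue_influenced": 0.0,
                        "cost_reduced": 0.0, "quality_improved": 2.0}))  # scale
print(renewal_decision({"time_recovered": 0.0, "revenue_influenced": 0.0,
                        "cost_reduced": 0.0, "quality_improved": 0.0}))  # do not renew
```

Activity metrics such as prompts run are left out of the function deliberately, since they say nothing about outcomes.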

This is the same standard you'd apply to any other marketing investment, and applying it to AI is what separates organizations that are building a real capability from those that are just accumulating subscriptions.

Knowing what’s working is a skill, and like any skill, it develops faster with the right training behind it. The leaders who are getting the most out of their AI investments are operating with a clearer strategic framework for how AI fits into their business, how to evaluate it honestly, and how to scale what works.

That's exactly what our AI training programs are built to develop. Whether you're a marketing professional looking to sharpen your own AI strategy or a business leader trying to bring your entire team up to speed, we have a path designed for where you are right now. Start with the AI SkillsBuilder® course if you're building foundational capability, or move directly into AI Mastery for Business Leaders if you're ready to operate at the strategic level.