The Great Disconnect

You read the reports. You see the headlines. According to the major research firms, AI adoption is happening everywhere. We are told that usage is now mainstream. Research from Wharton and GBK Collective finds that 82% of leaders use generative AI weekly.[1] McKinsey reports that nearly nine out of ten respondents say their organizations use AI regularly.[2] If you take the data at face value, the revolution is already won.

But when you look at the bottom line, a different story emerges. While usage is supposedly high, actual value is remarkably low. The MIT NANDA initiative reports that 95% of generative AI initiatives stall without delivering any measurable impact on the Profit and Loss (P&L) statement.[3] Nearly two-thirds of organizations remain stuck in experimentation.[2]

This creates a dangerous disconnect. We call it the AI Adoption Mirage. From a distance, the activity looks like progress. You see high login rates and enthusiastic surveys. But when you get closer, you realize the water isn't real. The activity is not translating into business value.

The problem is not the technology. The problem is how we define success. We are measuring frequency when we should be measuring proficiency. We are tracking how often people click a button, but we are failing to track whether they know what they are doing. This white paper proposes a new standard for calibrating enterprise AI transformation. We must move beyond simple usage metrics to a model based on synchronized maturity across governance, platform architecture, and human capabilities. In other words: security, strategy, and skills.

The Big Miss on Metrics

The current definition of "adoption" is flawed. When researchers such as Wharton and GBK Collective measure adoption, they focus on usage frequency. They ask leaders if they use AI daily or weekly. They track desktop tasks like summarizing meetings, editing documents, or writing emails.[1]

This creates a mirage of internal expertise. It counts a novice asking ChatGPT to write a poem the same way it counts an AI architect designing a complex workflow. This masks the problem for the C-suite and provides them with a completely inaccurate picture of AI adoption.

High frequency does not equal high skill. In fact, if we simply add proficiency to the definition, we find the opposite of what the usage reports suggest: despite high usage, real adoption is low.

We must distinguish between two very different types of AI use.

The first is Individual Augmentation. This is where most organizations sit today. It involves using tools like ChatGPT or Copilot for repeatable office tasks. It is personal, isolated, and rarely impacts the enterprise P&L significantly. This is what the surveys are measuring.

The second is the Agentic Engine. This is the goal. It involves the transformative redesign of core business workflows. It requires reimagining entire processes, not just helping one person work faster.

Current research confuses the two. It celebrates high rates of Individual Augmentation as if they were strategic wins. They are not. They are often just noise. Without structural readiness and mastery, this "desktop-level" use creates a ceiling. You cannot build a competitive advantage on employees who only know how to ask a chatbot for a summary.

Measuring AI Mastery

To fix the measurement problem, we need a better ruler. We cannot rely on binary metrics that ask if someone is "using AI" or not. We must measure how they are using it.

We developed the 10 Stages of AI Mastery to map this progression. This framework moves beyond simple prompting. It describes the evolution from casual experimentation to the design of autonomous agents. It breaks adoption down into three distinct phases of maturity.

Phase 1: The Illusion of Competence (Stages 1 to 3)

This is where most of the world lives today.

Stage 1 (The Explorer): You ask simple questions. You use AI as a smarter search engine.

Stage 2 (The Experimenter): You try different prompts to see what happens. You treat the tool like a toy or a novelty.

Stage 3 (The Craftsperson): You begin to write structured prompts. You save templates. You get consistent results for individual tasks.

When reports say "82% adoption," they're counting people in this phase. These users are generating text, but they're not generating business value or transforming operations. They're just chatting.

Phase 2: The Missing Middle (Stages 4 to 6)

This is the bridge that most organizations fail to cross.

Stage 4 (The Curator): You stop prompting and start curating. You feed the AI context, style guides, and data to shape the output. You realize the AI is only as good as the information you give it.

Stage 5 (The Architect): You design multi-step workflows and chain tasks together. You stop thinking about "chats" and start thinking about "processes."

Stage 6 (The Orchestrator): You scale these workflows to your team and build standards that allow others to repeat your success.

Phase 3: The Transformation Zone (Stages 7 to 10)

This is where the Agentic Engine is built.

Stage 7 (The Automator): You build tools that others use without knowing how they work.

Stage 8 (The Integrator): You connect AI to real systems like CRMs and ERPs. The AI does the work, not just the talking.

Stage 9 (The Agent Developer): You build autonomous agents that observe, decide, and act.

Stage 10 (The AI Leader): You manage a hybrid workforce of humans and agents. You define the strategy for the new operating model.

True adoption doesn't happen until you reach Phase 2, and transformation doesn't happen until Phase 3.
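To make the distinction concrete, here is a minimal Python sketch of how the phase boundaries change an adoption number. It assumes each employee has already been assessed and given a single stage score, with 0 standing for someone who does not use AI at all. The functions `phase_of` and `adoption_rates` are hypothetical illustrations, not part of any INGRAIN AI tool.

```python
from collections import Counter


def phase_of(stage: int) -> int:
    """Map a 10 Stages score to its phase (0 = not using AI at all)."""
    if not 0 <= stage <= 10:
        raise ValueError(f"stage must be between 0 and 10, got {stage}")
    if stage == 0:
        return 0
    if stage <= 3:   # Stages 1-3: The Illusion of Competence
        return 1
    if stage <= 6:   # Stages 4-6: The Missing Middle
        return 2
    return 3         # Stages 7-10: The Transformation Zone


def adoption_rates(stages: list[int]) -> dict[str, float]:
    """Contrast a usage-style adoption rate with mastery-based rates."""
    if not stages:
        raise ValueError("need at least one assessed employee")
    phases = Counter(phase_of(s) for s in stages)
    total = len(stages)
    return {
        # Usage view: anyone at Stage 1 or above counts as an adopter.
        "usage_adoption": (total - phases[0]) / total,
        # Mastery view: only Phase 2 and Phase 3 count as true adoption.
        "true_adoption": (phases[2] + phases[3]) / total,
        # Transformation view: only Phase 3 counts.
        "transformation": phases[3] / total,
    }
```

For ten hypothetical employees scored `[0, 1, 2, 2, 3, 3, 3, 3, 4, 5]`, `adoption_rates` returns a usage rate of 0.9, a true adoption rate of 0.2, and a transformation rate of 0.0. The same workforce reads as 90% adoption in a usage survey and 20% once mastery is counted.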

The Reality Behind the 95% Failure Rate

The research reports paint a picture of rapid advancement. Our experience on the ground shows something very different. We consult and train workforces at companies and universities across the globe. We poll employees at conferences and workshops. The data is consistent and alarming.

Across our work with hundreds of business leaders and their workforces over the past three years, we find that 98% of employees operate below Stage 4.

The "Average Employee" sits at Stage 3 or lower. They're good at crafting a prompt, but they lack the skills to curate context or design a workflow. They hit a ceiling where the tool is helpful but not transformative.

The "Average Executive" is even further behind, often sitting at Stage 2 or less. They're experimenting casually, yet are responsible for making strategic decisions about a technology they do not fully understand.

This creates a dangerous gap. We often see workers who try to jump ahead. They attempt Stage 5 activities, like building a custom GPT or a workflow, without mastering Stage 3 or 4. They skip the fundamentals of context curation.

The result is failure. When you skip Stage 4, you feed the AI poor context. The AI hallucinates. The workflow breaks. The output is unusable. This is why 95% of pilots fail to impact the P&L.[3] Organizations are trying to build Stage 8 integrations with Stage 2 talent. It's not an adoption problem; it's a mastery problem.

The Three-Axis Maturity Model

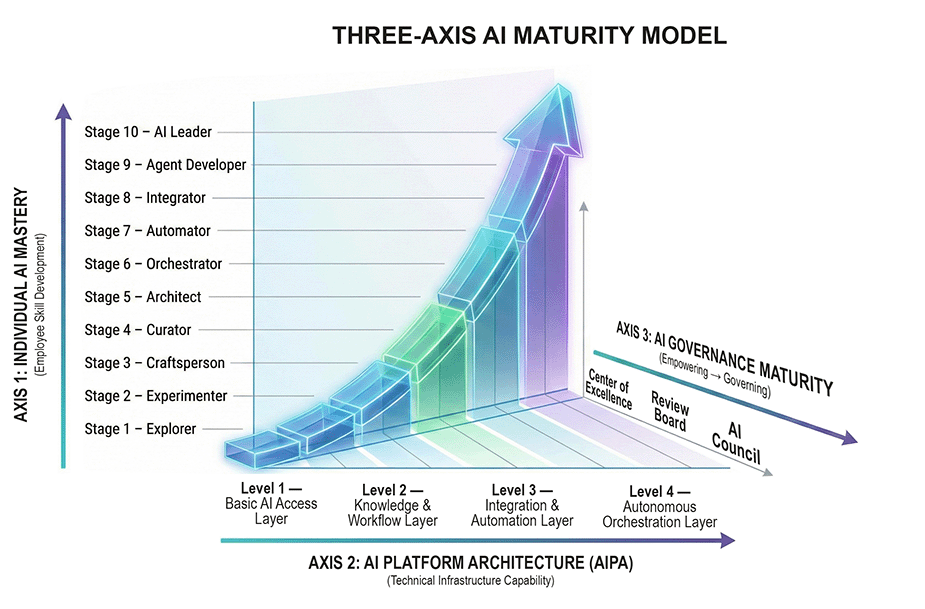

We have established that skill is missing. But skill alone is not enough to fix the problem. You can teach pilots how to fly, but if you don't give them a plane, they stay on the ground. We use the INGRAIN AI Three-Axis Maturity Model to explain why initiatives fail. To succeed, an organization must mature across three distinct areas at the same time.

Axis 1: Human Capability (Mastery)

This is the 10 Stages framework we just discussed. It measures what your people can actually do.

Axis 2: Platform Architecture (AIPA)

This measures what your technology allows you to do. It ranges from Level 1, which is a basic chat interface, to Level 4, which is fully autonomous orchestration.

Axis 3: Governance

This measures your oversight. It ranges from Tier 1, an enablement-focused Center of Excellence, to Tier 3, a strict AI Council that manages risk.

The "95% failure rate" reported by MIT happens when these axes are out of alignment.[3]

Imagine you have an employee who is a skilled Stage 8 Integrator. They know how to connect AI to your CRM system. They're ready to automate complex tasks. But your organization is stuck on AIPA Level 1. You only provide them with a basic chatbot. You have capped their potential. They cannot do the work that leads to significant impact.

The reverse is also dangerous. Imagine you buy AIPA Level 3 tools. You give your team access to automation platforms and API connectors. But your team is still at Stage 3 and your governance is at Tier 1. They're Craftspeople. They don't understand system architecture. Without an AI Review Board and clear rules to govern them, they'll take unnecessary risks and build brittle automations that create more work than they eliminate.

You cannot have high autonomy without high governance. You can't have high impact without high capability. When researchers look at adoption, they rarely look at this alignment. They see that the tools have been purchased and that users are logging in, and they declare victory. But because the axes are misaligned, the engine never starts.

The Four Levels of AI Platform Architecture

The AI Platform Architecture (AIPA) is the technical foundation that enables or constrains what your workforce can accomplish. Understanding these levels is critical to aligning your platform investments with your talent development strategy.

AIPA Level 1: Basic AI Access Layer

This is where most organizations start. Level 1 provides access to a single LLM through a secure chat interface. It supports basic prompting, document uploads, and individual productivity tasks. This level is governed by a Center of Excellence (CoE) that focuses on training and acceptable-use guidelines.

Level 1 supports Stages 1 through 3 (Explorer, Experimenter, Craftsperson). At this level, employees can draft emails, summarize documents, and brainstorm ideas, but they cannot build workflows or connect to enterprise systems.

AIPA Level 2: Knowledge and Workflow Layer

Level 2 introduces infrastructure for storing and retrieving domain knowledge. Organizations at this level deploy vector databases, shared prompt libraries, and custom GPT creation capabilities. Teams can build reusable workflows and internal assistants that draw from curated knowledge repositories.

Level 2 supports Stages 4 through 6 (Curator, Architect, Orchestrator). This is the level where organizations begin to see team-scale productivity gains. However, these workflows still operate in isolation from core enterprise systems.

AIPA Level 3: Integration and Automation Layer

Level 3 is the critical turning point. At this level, AI can take action across organizational systems through secure APIs, automation platforms, and data pipelines. Organizations implement API gateways, credential vaulting, and system-level logging.

Level 3 supports Stages 6 through 8 (Orchestrator, Automator, Integrator). This is the first level of operational AI where systems can update CRMs, route tickets, and execute scheduled workflows. At this level, governance must advance from the CoE to a formal AI Review Board that approves automations and monitors data flows.

AIPA Level 4: Autonomous Orchestration Layer

Level 4 is the most sophisticated platform architecture. It supports autonomous agents capable of observing, deciding, and acting across multiple systems with minimal human intervention. This level requires multi-agent orchestration frameworks, real-time monitoring dashboards, kill switches, and full audit trails.

Level 4 supports Stages 9 through 10 (Agent Developer, AI Leader). Organizations at this level have AI systems that operate 24/7, handle complex multi-step processes, and collaborate across departments. Governance at this level requires an AI Council, the highest tier of oversight, focused on enterprise strategy, compliance, and autonomous system risk management.

Empowering Governance is Critical

Governance is not a barrier to AI adoption. It's the accelerator that makes safe, scaled deployment possible. The INGRAIN AI Governance Maturity Model defines three tiers that must evolve proportionally with platform capability and human skill.

Tier 1: AI Center of Excellence (CoE)

The CoE is the foundation of AI governance. It focuses on enablement, training, and lightweight oversight. The CoE publishes prompt libraries, establishes acceptable-use guidelines, and supports early-stage experimentation.

The CoE governs AIPA Levels 1 and 2, supporting employees at Stages 1 through 6. Risk at this tier is low because AI actions are contained within user interfaces with no external integrations or autonomous behaviors.

Tier 2: AI Review Board

As organizations move to AIPA Level 3 and begin integrating AI with internal systems, the AI Review Board becomes essential. This body reviews and approves automated workflows, evaluates integration risks, and establishes human-in-the-loop requirements.

The Review Board governs AIPA Level 3, supporting employees at Stages 6 through 8. Risk at this tier is medium because automations can now impact real systems like CRMs, ERPs, and ticketing platforms.

Tier 3: AI Council

The AI Council represents the highest level of governance maturity. This body establishes enterprise-wide AI policy, governs autonomous systems, and ensures regulatory compliance. The Council oversees agent capabilities, manages lifecycle policies, and conducts failure mode analysis.

The AI Council governs AIPA Level 4, supporting employees at Stages 8 through 10. Risk at this tier is high because AI systems operate independently, impact critical business processes, and interact with sensitive data at scale.

The consolidation of strategy and accountability in the C-suite, demonstrated by the presence of Chief AI Officers in 60% of enterprises, highlights the necessity of this top-tier formal governance.[4]

The Executive Blind Spot

This brings us to the leadership dilemma. If you're an executive reading this, we have a hard truth for you. You might be the bottleneck.

We often find that the C-suite sits at Stage 2. Leaders experiment. They see the potential and approve the budget for enterprise software. They assume that because they bought the tool, the transformation will follow.

This assumption leads straight into the "Trough of Disillusionment." Organizations invest heavily. They roll out the software. Usage spikes. But six months later, the Profit and Loss statement hasn't changed. The excitement turns into frustration.

The failure happens because leaders prioritize Axis 2 (Platform) while neglecting Axis 1 (Mastery). They buy the car, but they don't teach anyone how to drive.

You cannot buy your way out of this problem. You must build a "Feeder System" of talent. You need a structured plan to move your workforce up through the 10 Stages. You need Architects (Stage 5) to design the work. You need Orchestrators (Stage 6) to scale it. If you do not build this internal capability, your expensive software will remain a toy.

Research from McKinsey confirms this pattern. High performers are three times more likely than their peers to have strong senior leader ownership and commitment to their AI initiatives.[2] Leadership engagement is not optional. It's the difference between pilots that stall and transformations that deliver EBIT impact.

Recalibrating for an Accurate Assessment

The solution to the adoption crisis is to stop counting logins and start auditing mastery. We must stop asking "How many people are using AI?" and start asking "What stage are they operating at?"

We need to be realistic about the distribution of talent. The goal isn't to force every single employee to Stage 10 overnight. True Stage 10 leadership requires a level of autonomy that enterprise security protocols are not yet fully ready to support.

Instead, successful organizations aim for a specific bell curve distribution.

The Target Mass (Stages 5 to 7): The majority of your workforce should sit here, specifically the employees who use computers for more than 50% of their working hours. These are your Architects, Orchestrators, and Automators. When this group reaches Stage 5 or higher, you achieve a critical "Density of Knowledge." This density creates the momentum required to shift the organization from simple Agentic Labor to a true Agentic Engine.

The High Performers (Stages 8 to 9): A smaller group of outliers will advance to become Integrators and Agent Developers. These are the technical leads who connect the workflows designed by the mass workforce into live enterprise systems.

The Reality Check: Currently, most organizations have a bell curve that is skewed heavily to the left. Based on our observations working with hundreds of business leaders over the past three years, the majority are stuck at Stages 1, 2, and 3. They're experimenting, but not architecting. Until you shift that curve to the right, your ROI will remain flat.

You cannot build an Agentic Engine with Stage 3 talent. You need the structural integrity that comes from Stage 5 Architects who understand workflow design. You need the governance of Stage 6 Orchestrators who understand standardization. Once you achieve this density of knowledge, the transformation becomes self-sustaining.

Research validates this approach. McKinsey found that high performers are nearly three times more likely to pursue transformative change by fundamentally redesigning workflows, rather than merely augmenting existing processes.[2] This workflow redesign cannot happen without a critical mass of employees operating at Stage 5 or higher.

How Current Research Overstates Adoption

The problem with current research isn't that the data is wrong. It's that the measurement system is incomplete, leading to inaccurate conclusions that overstate adoption and underestimate the transformation problem.

When Wharton reports that 82% of leaders use Gen AI weekly, they're measuring frequency.[1] When McKinsey reports that 89% of organizations are using AI, they're measuring activity.[2] These metrics capture Individual Augmentation but mistake it for enterprise transformation.

The research accurately shows that people are logging in. It accurately shows that tools are being used. But it fails to measure the structural prerequisites for value capture. It doesn't assess whether employees have the mastery to design workflows or whether the platform can support system integration. It doesn't assess whether governance can manage the risk.

This incomplete measurement creates three dangerous blind spots.

Blind Spot 1: Conflating Usage with Capability

Current metrics treat a Stage 2 executive using AI to draft an email the same as a Stage 8 Integrator connecting AI to a CRM. Both show up as "weekly users" in the data. But their organizational impact differs by orders of magnitude.

Blind Spot 2: Ignoring Platform Constraints

Surveys ask "Are you using AI?" without asking "What level of platform architecture do you have access to?" An employee might be a skilled Stage 6 Orchestrator, but if they only have access to AIPA Level 1, their capabilities are artificially capped. The research counts them as "adopters" even though the organization has failed to provide the infrastructure for meaningful impact.

Blind Spot 3: Underestimating Governance Gaps

Current research rarely measures governance maturity. Organizations might have AIPA Level 3 platforms and Stage 7 talent, but without a Review Board to manage approvals and monitor risk, automations fail or create compliance violations. The research sees the investment and declares success, missing the governance vacuum that prevents safe scaling.

The result is a massive overestimation of true adoption. The 82% usage rate tells us that people are experimenting. It doesn't tell us they are transforming. Our ground-level data, gathered from working with hundreds of business leaders over the past three years, shows that 98% of employees haven't crossed into Phase 2 mastery. They're stuck below Stage 4, unable to curate context or architect workflows.

This is why MIT reports that 95% of generative AI initiatives stall.[3] The initiatives fail not because the technology is inadequate, but because the measurement system lulled leaders into believing they had adoption when they only had activity.

The True Path to AI Transformation

Organizations that want to move beyond the mirage need a transformation plan that addresses all three axes simultaneously. Here is the roadmap.

Step 1: Audit Your Current State

Assess where your workforce sits on the 10 Stages of AI Mastery. Don't rely on usage metrics. Interview employees. Test their ability to curate context, design workflows, and think systematically about AI tasks. Map the distribution of your talent across the stages.

Next, evaluate your AIPA level. Do you provide only basic chat access (Level 1), or have you invested in knowledge repositories (Level 2), system integrations (Level 3), or autonomous orchestration (Level 4)?

Finally, assess your governance maturity. Do you have a Center of Excellence? A Review Board? An AI Council? Is your governance tier aligned with your platform capability and employee skill?

Step 2: Identify Alignment Gaps

Look for mismatches between the three axes. If you have Stage 7 employees but only AIPA Level 1 infrastructure, you have a platform gap. If you have AIPA Level 3 capabilities but no Review Board, you have a governance gap. If you have Level 3 infrastructure but Stage 2 employees, you have a talent gap.

These gaps are the reason your pilots are stalling. Fix them before you invest in more tools or launch more initiatives.
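The three mismatches above can be checked mechanically once the audit from Step 1 is done. Below is a minimal Python sketch, assuming the stage ranges and governance tiers described in the platform and governance sections; the names `Assessment`, `alignment_gaps`, `SUPPORTED_STAGES`, and `REQUIRED_TIER` are hypothetical. It also assumes two judgment calls: a platform gap is flagged when your most advanced employee is capped by the platform, and a talent gap when the median employee sits below what the platform supports.

```python
from dataclasses import dataclass
from statistics import median_low

# Stage range each AIPA level supports, per the platform-level descriptions.
SUPPORTED_STAGES = {1: (1, 3), 2: (4, 6), 3: (6, 8), 4: (9, 10)}
# Minimum governance tier per AIPA level: 1 = CoE, 2 = Review Board, 3 = AI Council.
REQUIRED_TIER = {1: 1, 2: 1, 3: 2, 4: 3}


@dataclass
class Assessment:
    stages: list[int]     # one 10 Stages score (1-10) per assessed employee
    aipa_level: int       # platform architecture level, 1-4
    governance_tier: int  # 0 = no formal governance, otherwise 1-3


def alignment_gaps(a: Assessment) -> list[str]:
    """Flag mismatches between the talent, platform, and governance axes."""
    if not a.stages:
        raise ValueError("need at least one assessed employee")
    if a.aipa_level not in SUPPORTED_STAGES:
        raise ValueError("aipa_level must be between 1 and 4")
    low, high = SUPPORTED_STAGES[a.aipa_level]
    gaps = []
    # Platform gap: your most advanced people are capped by the platform.
    if max(a.stages) > high:
        gaps.append(f"platform: Stage {max(a.stages)} talent on AIPA Level {a.aipa_level}")
    # Talent gap: the typical (median) employee cannot use what the platform offers.
    typical = median_low(a.stages)
    if typical < low:
        gaps.append(f"talent: median Stage {typical} on AIPA Level {a.aipa_level}")
    # Governance gap: oversight lags behind the platform's level of autonomy.
    if a.governance_tier < REQUIRED_TIER[a.aipa_level]:
        gaps.append(f"governance: Tier {a.governance_tier} for AIPA Level {a.aipa_level}")
    return gaps
```

Run against the Level 3 scenario described earlier (a Stage 3 team with only a Center of Excellence), this sketch reports both a talent gap and a governance gap. Using the median rather than the average keeps a few advanced outliers from masking a workforce that is mostly at Stages 1 to 3.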

Step 3: Build the Feeder System

Focus on moving your "Target Mass" of employees from Stage 3 to Stage 5. This requires structured training that goes beyond prompt engineering. Employees need to learn context curation (Stage 4) and workflow architecture (Stage 5).

This isn't a one-time workshop. It's a sustained capability-building program. Organizations that succeed treat AI mastery like any other critical skill. They build learning paths, provide coaching, and measure progress against the 10 Stages framework.

Step 4: Scale Infrastructure and Governance Together

As your workforce matures, upgrade your platform architecture. When you reach a critical mass of Stage 5 employees, invest in AIPA Level 3 to enable system integration. At the same time, establish your AI Review Board to govern these new capabilities.

Do not make the mistake of buying Level 3 infrastructure before your workforce is ready. And don't wait until after a governance incident to formalize oversight. Platform and governance must scale together, paced by the growth of human capability.

Step 5: Measure What Matters

Replace usage metrics with maturity metrics. Track the distribution of employees across the 10 Stages. Monitor the percentage of your workforce that has reached Stage 5 or higher. Measure the number of workflows (not just prompts) that have been deployed at scale.
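As an illustration of what these maturity metrics might look like in practice, here is a minimal Python sketch that turns a list of assessed stage scores into a stage distribution, the share at Stage 5 or higher, and the bands from the target bell curve (Target Mass at Stages 5 to 7, High Performers at Stages 8 to 9). The function name `maturity_report` is hypothetical, and reading "majority" as more than half of assessed employees is an assumption.

```python
from collections import Counter


def maturity_report(stages: list[int]) -> dict:
    """Summarize a workforce's 10 Stages distribution with maturity metrics."""
    if not stages or any(not 1 <= s <= 10 for s in stages):
        raise ValueError("stages must be a non-empty list of values from 1 to 10")
    total = len(stages)
    counts = Counter(stages)

    def share(lo: int, hi: int) -> float:
        # Fraction of assessed employees whose stage falls in [lo, hi].
        return sum(counts[s] for s in range(lo, hi + 1)) / total

    return {
        "distribution": {s: counts[s] / total for s in range(1, 11)},
        "stage_5_plus": share(5, 10),
        "target_mass_5_to_7": share(5, 7),
        "high_performers_8_to_9": share(8, 9),
        "left_skew_1_to_3": share(1, 3),
        # Assumption: "majority" means more than half of assessed employees.
        "target_mass_is_majority": share(5, 7) > 0.5,
    }
```

Tracking these numbers quarter over quarter shows whether the curve is actually shifting to the right, which a login count cannot reveal.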

Track business outcomes, not activity. How many processes have been redesigned? What percentage of transactions have been automated? What is the measured impact on your P&L?

These metrics will give you a real picture of adoption. They will reveal whether you are building an Agentic Engine or just accumulating activity.

Next Steps

The headlines about AI adoption rates are painting a misleading picture. They celebrate frequency while ignoring proficiency. This creates a mirage where activity looks like value, but the business outcomes never materialize.

The research from Wharton, McKinsey, Harvard Business Impact, and other institutions isn't wrong. But it is incomplete. By measuring usage without measuring mastery, by tracking activity without tracking architecture, and by largely ignoring governance, these studies overstate AI adoption rates and underestimate the size of the transformation problem.

You have an opportunity to correct this course. Use the first half of 2026 to realign your strategy. Stop buying more tools for people who don't know how to use them. Stop celebrating login metrics when your P&L has not changed. Stop skipping the foundational work of building mastery, platform capability, and governance in synchronization.

Focus on moving your "Target Mass" of employees to Stage 5. Establish the governance to keep them safe and the platform architecture to let them build. If you align these three axes, you'll stop chasing the mirage and start seeing the results.

The choice is yours. You can continue measuring what is easy (usage frequency) or you can measure what matters (structural maturity). One path leads to activity. The other leads to transformation.

Take the next step: Get a copy of INGRAIN AI today and learn how to drive real AI adoption in your organization.

References

[1] Wharton Human-AI Research and GBK Collective. "Accountable Acceleration: Gen AI Fast-Tracks Into the Enterprise." 2025.

[2] McKinsey & Company. "The state of AI in 2025: Agents, innovation, and transformation." 2025.

[3] MIT NANDA Initiative. "MIT report: 95% of generative AI pilots at companies are failing." 2025.

[4] Harvard Business Impact. "Fast, Fluid, and Future-Focused: Building the Collective Intelligence of Humans and Machines." 2025.

[5] IBM Research. "From Benchmarks to Business Impact: Deploying IBM Generalist Agent in Enterprise Production." 2025.