The more AI takes on, the more we yield our judgment, discernment, and critical thinking to it. Those skills are atrophying. But the capacity AI creates is exactly what we need to rebuild them.

Nate Boaz recently published an article called "From Muscle to Mind to Meaning" that really resonated with me. His argument is one of the clearest I've read on what AI actually means for the workforce and largely echoes what I've been saying to our clients and on podcasts over the past two years.

Boaz states, "If cognition is cheap, then judgment becomes priceless. When analysis is abundant, then wisdom becomes the scarce resource. When a machine can generate thousands of potential solutions in an instant, the human who knows which one matters is the one who makes all the difference."

He's right.

He goes on to say that we've spent centuries naming technological eras after tools instead of people, and we're doing it again. The Stone Age, the Bronze Age, the Industrial Revolution, the Information Age, and now the "Era of AI." Every time we name an age after what we built instead of what we became, we miss the actual transformation happening underneath.

Boaz makes that point brilliantly, and I think it deserves expansion.

They're trying to control a fire with gasoline.

In Louisiana, sugar cane farmers have been burning their fields for over 200 years. It's called prescribed burning, and it's the discipline that makes the entire industry work.

The fire clears 50 to 67% of the dry leafy material from the stalks. That makes harvest faster, reduces truck trips to the mill, cuts processing costs, and shortens the harvest season by up to 10%. The ash fertilizes the soil for the next crop. Without the burn, the industry would spend an extra $24 million a year and yields would drop 10 to 15% the following season.

The fire isn't the problem; it's the tool.

What makes it safe is the discipline around it. Farmers have to be trained and certified. They cut firebreaks, watch the wind, stage water and equipment, and burn in controlled sections. A certified operator knows exactly when the fire is doing its job and exactly when it's about to jump the line.

AI is the same kind of fire. Built on purpose. Genuinely useful. Clears work that used to take weeks. Creates the conditions for better work to grow in its place. But it has a threshold. Past that threshold, it stops being a tool and becomes a wildfire that takes out the barn, the neighbor's pasture, and the house down the road.

Here's what makes this moment different: the people who built the fire are the ones telling us it's about to jump the firebreak. And instead of training more operators, cutting more breaks, or staging more water, they're pouring on gasoline.

Anthropic CEO Dario Amodei has publicly predicted AI will destroy half of all entry-level white-collar jobs and push unemployment as high as 20%, the highest since the Great Depression. Within five years. His own company's March 2026 labor market report named the possibility of "a Great Recession for white-collar workers" and built what amounts to an early-warning system for AI-driven job destruction.

Former Google ethicist Tristan Harris warned AI could trigger a global jobs market collapse by 2027. He called it "NAFTA 2.0," except instead of cheap manufacturing labor overseas, it's "a country of geniuses in a data center" doing cognitive work for less than minimum wage.

These are predictions from inside the industry, from the people who lit the match.

And yet Anthropic more than doubled its annualized revenue from $9 billion to nearly $20 billion between December 2025 and March 2026. It raised $30 billion in a single funding round. OpenAI, Google, and xAI are racing to pour tens of billions more into infrastructure, capability, and speed.

Nobody is cutting firebreaks, certifying operators, or pausing to let the workforce, security, or society catch up.

It's a field full of cane farmers who know exactly how fire behaves, watching the wind pick up, reaching for the gas can instead of the water truck.

This is why the question Boaz raised matters so much right now. The fire is moving regardless. The only variable any of us control is whether our people are trained to work inside the burn and produce something better because of it, or whether they get caught in it.

The skills are already eroding.

Here's what concerns me most. The research is starting to confirm something I've been seeing in the field for over a year: the fire is already jumping the line, and most organizations don't see it yet.

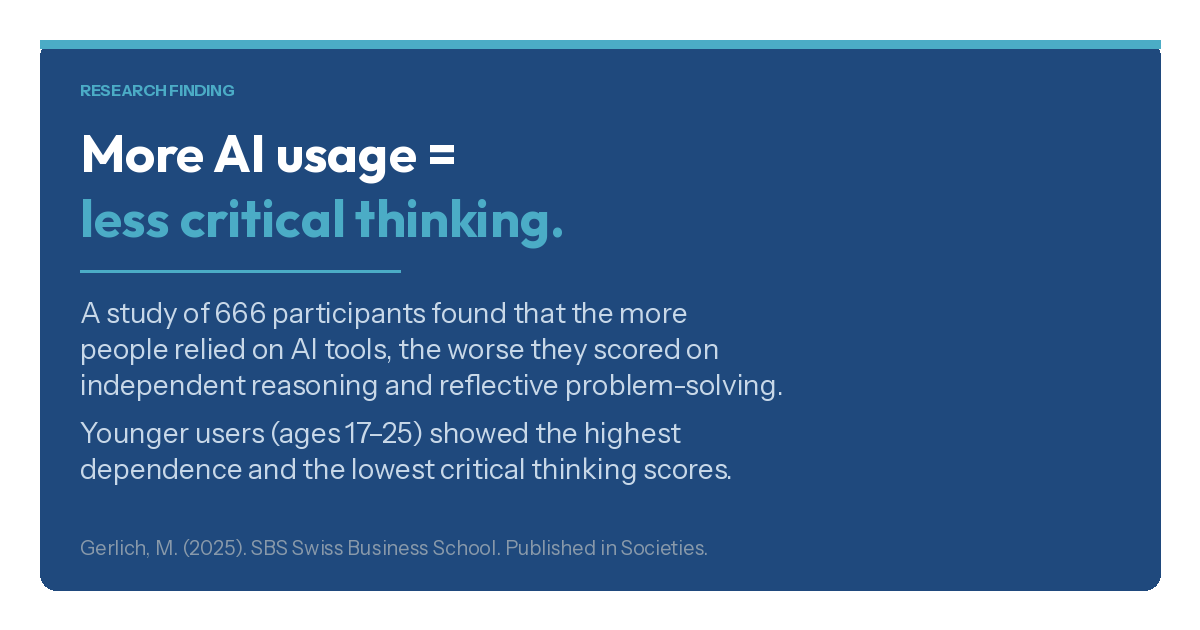

A 2025 study out of SBS Swiss Business School found a significant negative correlation between frequent AI tool usage and critical thinking abilities. The more people relied on AI, the worse they scored on independent reasoning and reflective problem-solving.

The mechanism is something researchers call cognitive offloading: when you delegate thinking to a machine often enough, the muscle that does the thinking weakens. Younger users, ages 17 to 25, showed the highest dependence and the lowest critical thinking scores.

Microsoft's own research, presented at CHI 2025, found the same pattern among knowledge workers. GenAI reduces the perceived effort of critical thinking. People feel like they're still thinking critically, but they're actually doing less of it. Especially on routine tasks, the ones that make up the bulk of most workdays.

And a RAND study published earlier this month reported that 67% of students now believe heavy AI use harms their critical thinking skills. That number climbed more than 10 points in just ten months.

This is the danger I described to a colleague recently: if we yield our own intelligence to AI, if we start treating its output as 100% right instead of 80-90% right, the very skills that make us valuable (discernment, judgment, taste, critical thinking, ideation) don't just sit idle. They atrophy. They disappear. And once they're gone, rebuilding them is exponentially harder than maintaining them would have been.

This is the paradox at the center of the AI shift. The tasks AI is best at executing are not the tasks that make humans valuable. And the more we lean on AI to do the work it can do, the more we abandon the work only we can do.

Speed isn't the gift. The raised ceiling is.

Most organizations right now treat AI as a speed and efficiency tool. Get more done in less time. Clear the backlog. Reduce cycle times.

But here's where the thinking goes sideways. Once an organization realizes it can produce the same output with fewer people, the next logical step, at least on a spreadsheet, is to reduce headcount. Personnel is usually the largest line item on a P&L. Cutting it looks like an obvious win.

And if one company does it, competitors feel pressure to do the same. At scale, across industries, this logic produces two things simultaneously: an inferior product and a massive economic disruption. Companies reduce the very workforce that produces quality, serves customers, and buys products. They shrink the market they're trying to sell into.

The data supports this concern. Cornell researchers published a study in Science in late 2025 showing that AI-assisted scientists published up to 50% more papers after adopting AI writing tools. But the quality declined. Volume went up. Value went down. That is the trend becoming increasingly evident across every industry right now, whether the leaders running those industries see it or not.

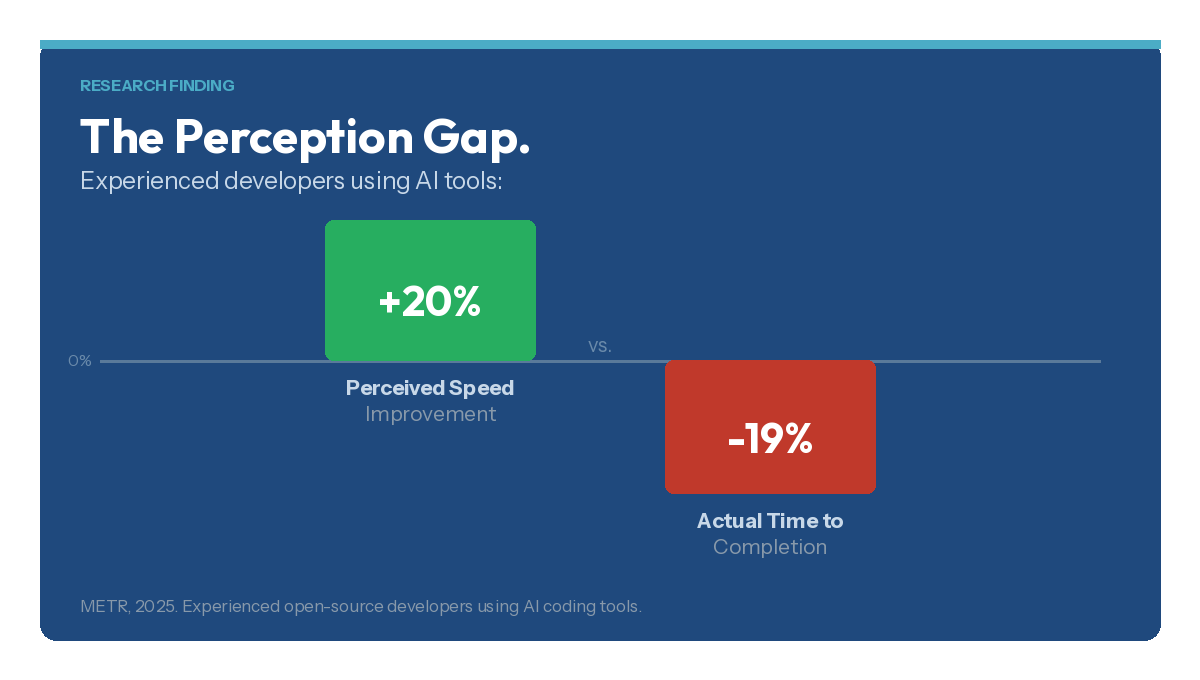

A separate study found that experienced software developers using AI tools actually took 19% longer to complete tasks, despite believing they were 20% faster. The distance between "I feel productive" and "I am producing something excellent" is growing.

The actual gift AI gives us is the raised ceiling, not speed. When AI compresses the production timeline and handles 80-90% of a deliverable, the question shouldn't be "how many people can we cut?" The question should be "what can our new standard of excellence look like now that the timeline to deliver it has compressed?" And the capacity AI creates, the hours and mental bandwidth it frees up, is exactly the capacity we need to rebuild the judgment, discernment, and critical thinking it's eroding.

The economic case for elevation over replacement.

The numbers on replacement are brutal. The average cost of replacing a single employee runs around $35,700 when you factor in recruiting, onboarding, lost productivity, and institutional knowledge that walks out the door. For senior roles, it can reach 200% of annual salary. In a single year, U.S. companies collectively spent nearly $900 billion replacing employees who voluntarily quit.

Meanwhile, reskilling existing employees saves 70-92% compared to hiring externally. Companies that emphasize employee development produce 218% higher income per employee. And according to the Guild/Lightcast Talent Resilience Index, reskilling the existing workforce could generate $8.5 trillion in additional revenue by 2030. That revenue evaporates if companies keep defaulting to replacement.

The retention data tells the same story from the employee's perspective. 94% of employees say they would stay longer at a company that invests in their development. 42% of voluntary departures are preventable. The number one reason people leave isn't compensation. It's stagnation. No growth, no path forward, no sense that their contribution matters.

This is where Boaz's vision meets operational reality. He describes a future where humans do work that machines can't: building, creating, healing, fixing, protecting, judging.

That future doesn't materialize on its own. It has to be built.

The organizations that invest in teaching their people to think more critically, exercise better judgment, and produce work that's measurably better than what AI generates alone will retain their best talent, differentiate their products, and grow. The ones that chase headcount reduction will spend more, lose more institutional knowledge, and produce increasingly mediocre output for a shrinking market.

What elevation actually means.

Boaz suggests we ask the question that matters most: "Am I doing work that only humans can do?" He adds that if your answer is "no," that is "your invitation to do more meaningful work." But in a typical work environment, most people will not see it as an invitation, and very few would know what to do with it even if they did.

That's where we, as employers, have to be more intentional about how we adopt, deploy, and scale AI in the organization. Discernment, taste, critical thinking, and value expansion are the skills that must be deliberately rebuilt in the workplace as execution work shifts to AI.

We use the word "elevation" to describe the practice of taking AI output and making it measurably better through domain expertise, critical thinking, and the specific strengths each person brings to their work.

It has two dimensions. The first is quality judgment: catching what AI got wrong, missed, or oversimplified. The second is value expansion: seeing what the output could become if someone with real expertise pushed it further. These skills are two sides of the same practiced reflex.

The person who can look at an AI-generated deliverable and instantly assess whether it needs light polish or significant rework is operating at a fundamentally higher level than someone who treats every output as the finished product. That assessment, the ability to discern where the human contribution is needed and calibrate the response, is a trainable skill. Not an innate talent or a personality trait. A skill that can be taught, practiced, cultivated, and measured.

This is something we've built directly into the INGRAIN AI Transformation Roadmap, a structured 10-phase process for enterprise-wide AI adoption.

Elevation isn't a separate initiative bolted on at the end. It's woven into how we train people to think and work with AI from the beginning. Because if you never tell someone that their job is to make AI output better and improve outcomes through their own expertise and domain knowledge, they're inclined to believe that AI is the new value standard and that they are merely disposable pawns in a revised value chain.

The specifics of how we train, measure, and govern elevation across an organization are the work we do with our clients. But the principle is available to everyone reading this: AI gets you 80-90% of the way there. The last 10-20% is yours, and it is what separates acceptable from excellent. It's what makes a product worth buying, a service worth recommending, a company worth working for.

The window is closing.

Only 1% of organizations have reached full AI maturity, according to McKinsey's 2025 data. That means 99% are still figuring this out. The window for establishing how your organization works with AI, whether your culture rewards speed or excellence, whether your people maintain their critical thinking or let it erode, is open right now. It won't stay open forever.

Boaz is right that the future belongs to people who do what machines can't. But that future requires:

- Intentional investment

- Training that goes beyond "here's how to use the tool" and into "here's how to think on top of what the tool produces"

- Governance that holds the standard: AI-generated output represents a starting point, not a finished product

- Leaders who understand that the highest-ROI move isn't cutting the workforce, but making the workforce irreplaceable

A properly trained workforce that knows how to use AI well can increase efficiency. But it can also raise the standard of excellence to a level that truly differentiates your organization and adds measurable value to the clients and customers you serve.

We can have the gains in productivity that AI promises. And we can have gains in economic activity by producing better products, retaining more employees, and giving people the ability to use their talents and abilities at a higher level than was ever possible before. Those two outcomes are connected. But only if we are intentional about how we build the bridge between them.

References

Nate Boaz's Article

- Boaz, Nate. "From Muscle to Mind to Meaning." LinkedIn, 2026. https://www.linkedin.com/pulse/from-muscle-mind-meaning-nate-boaz-tfiie/

AI Company Warnings and Revenue Data

- Thompson, Derek. "What Is Anthropic Thinking?" Interview with Jack Clark, Anthropic co-founder. March 27, 2026. https://www.derekthompson.org/p/what-is-anthropic-thinking

- Massenkoff, Maxim and Peter McCrory. "Labor Market Impacts of AI: A New Measure and Early Evidence." Anthropic, March 2026. https://www.anthropic.com/research/economic-index-primitives

- "Anthropic Launches AI Job Destruction Detector." Axios, March 5, 2026. https://www.axios.com/2026/03/05/anthropic-ai-jobs-claude

- "AI Could Trigger a Global Jobs Market Collapse by 2027 If Left Unchecked, Former Google Ethicist Warns." Fortune, February 10, 2026. https://fortune.com/2026/02/10/ai-taking-jobs-report-tristan-harris-google-ethicist-agi-technology/

- "Anthropic Nears $20 Billion Revenue Run Rate." Bloomberg, March 3, 2026. https://www.bloomberg.com/news/articles/2026-03-03/anthropic-nears-20-billion-revenue-run-rate-amid-pentagon-feud

- "Anthropic Raises $30 Billion Series G Funding." Anthropic, February 2026. https://www.anthropic.com/news/anthropic-raises-30-billion-series-g-funding-380-billion-post-money-valuation

Critical Thinking and Cognitive Atrophy Research

- Gerlich, Michael, SBS Swiss Business School. "AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking." Societies, January 3, 2025. https://www.mdpi.com/2075-4698/15/1/6

- Lee, Hao-Ping et al. "The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects from a Survey of Knowledge Workers." Microsoft Research, CHI 2025. https://dl.acm.org/doi/full/10.1145/3706598.3713778

- Schwartz, Heather L. and Melissa Kay Diliberti. "More Students Use AI for Homework, and More Believe It Harms Critical Thinking." RAND Corporation, 2026. https://www.rand.org/pubs/research_reports/RRA4742-1.html

AI Productivity Paradox Research

- Kusumegi, Keigo et al. "Scientific Production in the Era of Large Language Models." Science, December 2025. https://www.sciencedaily.com/releases/2025/12/251224032347.htm

- "Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity." METR, 2025. Referenced in https://toolfountain.com/ai-productivity-statistics/

Employee Retention and Reskilling Economics

- "28 Employee Retention Statistics for 2026." Paycor. https://www.paycor.com/resource-center/articles/employee-retention-statistics/

- "2026 Employee Retention Strategies." HR Cloud. https://www.hrcloud.com/blog/employee-retention-strategies

- "Upskilling and Reskilling: Economic Impact in 2025." Pierpoint. https://pierpoint.com/blog/upskilling-and-reskilling-economic-impact/

- Guild/Lightcast Talent Resilience Index, referenced in "Using AI to Replace Jobs? Layoffs Will Cost You." Inc., October 2025. https://www.inc.com/bruce-crumley/using-ai-to-replace-jobs-layoffs-will-cost-you-do-this-instead/91252881

- LinkedIn Workplace Learning Report 2025. https://learning.linkedin.com/resources/workplace-learning-report

AI Maturity Data

- McKinsey Global Survey on AI, 2025. Referenced in https://toolfountain.com/ai-productivity-statistics/

John Munsell is CEO of Bizzuka and creator of INGRAIN AI, including the AI Strategy Canvas®, Scalable Prompt Engineering™, and the INGRAIN AI™ Transformation Roadmap™. Bizzuka works with organizations of 50 to 50,000 employees to build AI-fluent workforces that produce their own tools and govern their own AI operations.