Rolling out AI training across an entire organization requires four things most companies skip: a shared framework, a common vocabulary, a staged rollout, and a governance policy established before problems surface. Without these in place first, training becomes an individual activity. Some people finish it, most don't, and organizational capability stays exactly where it was before you spent the money. Each step below covers what to put in place, why the order matters, and what most organizations get wrong before anyone opens a course module.

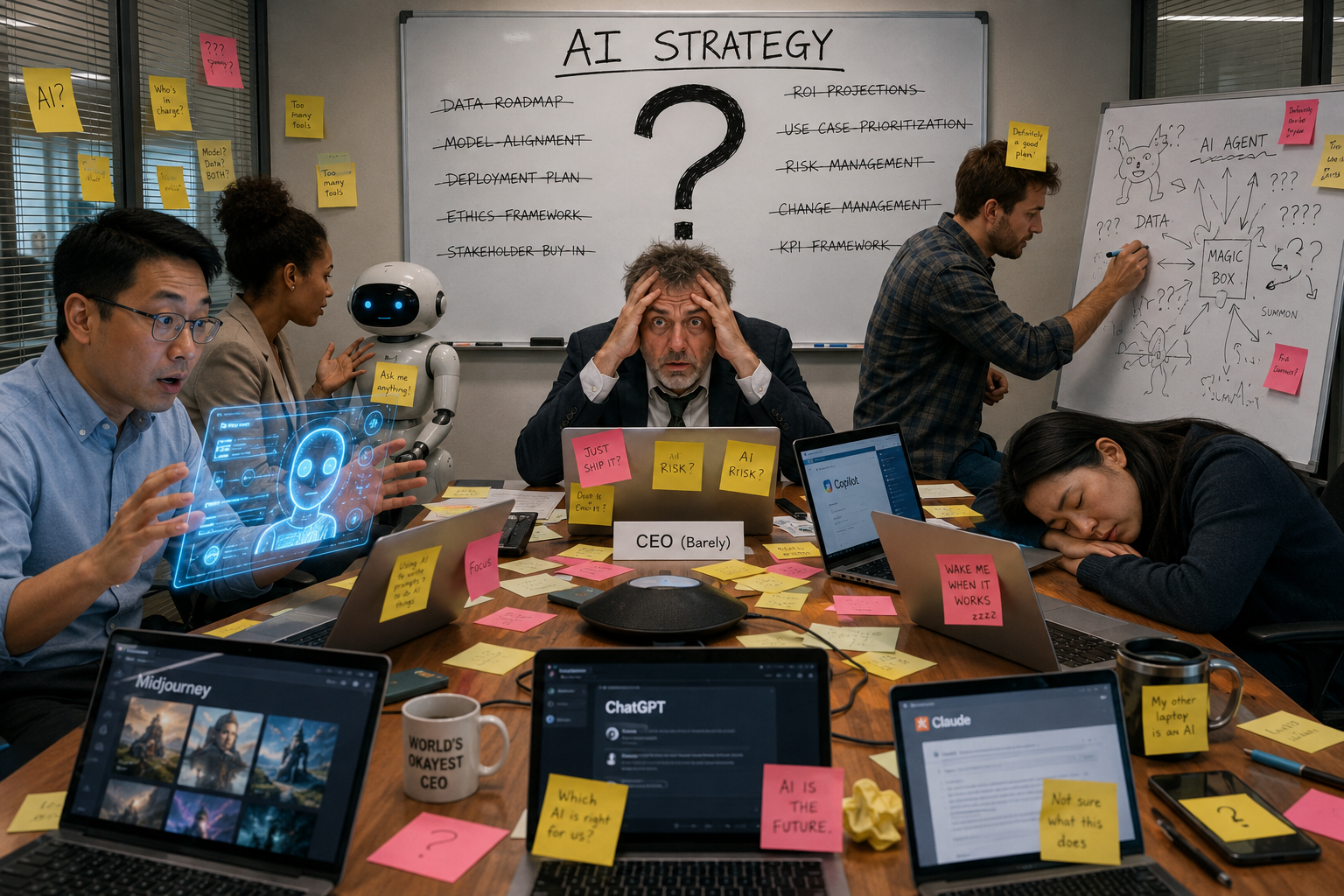

Someone in your organization is using AI every day, saving hours, producing better work, and quietly pulling ahead. Two floors up, the operations team tried it once, got a mediocre result, and went back to doing things manually.

Finance hasn't touched it. IT is experimenting with three different tools nobody else knows about. And your executive team is nodding along in board meetings about AI strategy while privately unsure what any of it means for day-to-day work.

This training problem is costing you more than you realize.

When AI adoption is left to chance, you don't get an AI-enabled organization. You get a collection of individuals at wildly different skill levels, using different tools, producing inconsistent results, with no way to share what's working. The person who figures out a powerful way to use AI keeps that knowledge in their head. It doesn't reach the person next to them, survive turnover, or become something your whole organization can build on.

Most business owners who set out to fix this make the same mistake: they send everyone a course link and call it a training program. Some people finish it, but most don't. And almost nobody changes how they work.

Rolling out AI training that sticks across an entire organization requires something most companies skip entirely: a shared foundation, common framework, and starting point everyone can stand on before they start building in different directions.

What Fragmented AI Training Costs You

You might not see it on a spreadsheet, but the cost of fragmented AI adoption is real and it compounds every week you let it continue.

Start with the time your team wastes. When there's no shared approach to AI, every person who wants to use it effectively has to figure it out on their own. They watch YouTube videos, take a free course, experiment, fail, and eventually land on something that works for them. That process takes months. Multiply it by every person in your organization and you're looking at an enormous amount of time spent rediscovering things someone else on your team already knows.

Then there's the duplication. Someone in sales builds a prompt that generates strong client proposals. A week later, someone in marketing builds something nearly identical because they had no idea the first one existed. Both people invested time creating something from scratch that could have been shared, improved, and standardized. Across a 50-person organization, that kind of duplication doesn't just waste hours. It creates a ceiling on what AI can do for you.

Inconsistency is just as damaging. When everyone uses AI differently, outputs vary wildly. One team member produces polished, accurate work. Another produces content that needs heavy editing before it's usable. A third is uploading sensitive client data into a free tool without realizing the privacy implications. You can't build a reliable, scalable operation on that kind of variation.

Competitive exposure rounds out the picture. Formally trained employees are 2.7x more proficient than self-taught peers. While your team is still experimenting independently, organizations that invested in structured training are already operating at a higher level. They're producing more, faster, with less rework. Every month without a coordinated training approach is a month your competitors are widening that distance.

The organizations that feel this most acutely are the ones where AI use is spreading. On the surface, it looks like progress. People are using the tools, outputs are coming in, and activity is visible. Underneath, the capability is random, non-transferable, and entirely dependent on individual motivation. When one of those individuals leaves, everything they figured out leaves with them.

Fragmented AI adoption doesn't just slow you down. It makes everything you're trying to build with AI harder to sustain.

Why Standard Training Programs Fail

Most business owners do the right thing when they recognize the problem. They find a course, buy licenses, send out a link, and wait for things to improve. Six months later, usage data is poor, managers report no behavior change, and the frustration is real. The investment didn't produce what anyone hoped for.

The reason standard training programs fail isn't that employees don't try. Most people who take a generic AI course learn something. A few new tricks, a better sense of what the tools can do, maybe a prompt or two they'll use for a week before reverting to old habits. Individual knowledge improves slightly. Organizational capability stays exactly where it was.

What's missing is structure. Not structure in the sense of a rigid curriculum or a compliance checklist. Structure in the sense of a shared framework that gives everyone a common language, a common approach, and a common standard for what good AI usage looks like inside your organization.

Without that foundation, training is just information. And information alone doesn't change how people work. Think about the last time your organization adopted a new tool. The people who got good at it fast weren't the ones who read the most documentation. Those who adapted quickly had clear expectations, real examples, and a system they could follow and repeat.

Generic AI courses are built for individuals. A course like that teaches someone how to use a tool for themselves, in their own context, at their own pace. What it doesn't do is give a 200-person organization a shared way of working. Common vocabulary doesn't emerge from it. Governance standards that protect your data don't come with it. Reusable systems that let one person's breakthrough become everyone's asset aren't part of the package.

The result is an organization full of people who completed training and changed very little. Some got better on their own, while others checked the box and moved on. The capability ceiling stayed right where it was before you spent the money.

Raising that ceiling requires something different: a program built not just to educate individuals but to align an entire organization around a shared way of using AI, safely, consistently, and at scale.


How to Build a Foundation Everyone Can Stand On

Before you roll out any training program, you need to make a decision that most organizations skip entirely. You need to decide what "good" looks like before anyone opens a single course module.

That means defining three things up front: what tools your organization will use, how people will use them, and what standards everyone is expected to meet. Without those three things in place, you're just rolling out permission to experiment, and hoping the experiments converge somewhere useful.

Start with a shared framework, not a tool list.

The instinct most leaders have is to pick the tools first. Choose ChatGPT or Claude, buy the licenses, and then figure out training. That order feels logical, but it puts the cart before the horse. Tools change, pricing changes, and platforms evolve. What doesn't change is the underlying skill of knowing how to communicate clearly with AI, structure your requests, and evaluate what comes back.

Start by giving your team a framework for thinking about AI before they ever open a chat window. That framework should answer four questions for every person in your organization:

- What is AI good at?

- What are the risks of using it carelessly?

- How do I structure a request to get a consistent result?

- How do I know if the output is trustworthy?

When everyone starts from those four questions, the tools become interchangeable, skills transfer, and new hires onboard faster. And when a better tool comes along, your team can adapt without starting over.

Establish a common language before training begins.

One of the most underestimated barriers to organization-wide AI adoption is vocabulary. When one person says "prompt," they might mean a single sentence typed into a chat window. Someone else means a structured, multi-part instruction set built around specific containers and variables. Both people think they're talking about the same thing. Neither is getting consistent results, and neither understands why.

Before training rolls out, align your organization on a shared vocabulary. Define what a prompt is, what a good output looks like, and the difference between using AI to assist a task and using AI to complete one. These definitions don't need to be complicated. A single page of agreed-upon terms, shared across your organization before training begins, eliminates weeks of confusion later.

Roll out in stages, not all at once.

The temptation with a company-wide initiative is to launch everything simultaneously. In practice, that approach produces a spike in activity followed by a sharp drop-off. People get overwhelmed, deprioritize it, and the momentum dies before it builds.

A staged rollout works significantly better. Start with a pilot group, ideally 10 to 20 people across different departments who are motivated and willing to provide feedback. Run them through the training, watch what sticks and what doesn't, and use what you learn to sharpen the rollout for everyone else. Pilot groups surface the questions your broader team will have, before those questions become obstacles at scale.

Once the pilot is complete, roll out by department rather than company-wide. Give each group a clear start date, clear expectations, and a point of contact who can answer questions. Progress at a pace the organization can absorb, not the pace that looks fastest on a project timeline.
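If you keep a list of volunteers and their departments, choosing a cross-department pilot group can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the function name `pick_pilot_group`, the default size of 15 (chosen from the 10 to 20 range above), and the sample names are all assumptions made for the example.

```python
from collections import defaultdict


def pick_pilot_group(volunteers, size=15):
    """Pick up to `size` volunteers, taking one per department in turn.

    `volunteers` is a list of (name, department) pairs. Rotating through
    departments keeps any single team from dominating the pilot.
    """
    by_department = defaultdict(list)
    for name, department in volunteers:
        by_department[department].append(name)

    # One queue per department, visited in alphabetical order.
    queues = [list(names) for _, names in sorted(by_department.items())]

    pilot = []
    while len(pilot) < size and any(queues):
        for queue in queues:
            if queue and len(pilot) < size:
                pilot.append(queue.pop(0))
    return pilot


# Hypothetical volunteers, for illustration only.
volunteers = [
    ("Ana", "Sales"),
    ("Ben", "Sales"),
    ("Cy", "Finance"),
    ("Di", "IT"),
    ("Eli", "Sales"),
]
print(pick_pilot_group(volunteers, size=4))  # ['Cy', 'Di', 'Ana', 'Ben']
```

The round-robin order is only one reasonable choice. In practice you would also weigh motivation and willingness to give feedback, which a script cannot judge for you.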

Build a shared prompt library from day one.

One of the highest-value steps you can take during a training rollout is creating a centralized place where prompts live. Not a folder on a shared drive, but a structured database that’s organized by use case, searchable, and accessible to everyone.

When someone figures out a prompt that saves them 45 minutes on a recurring task, that prompt should be available to every other person in the organization who does similar work. Organizations that centralize their prompt libraries see a 40 to 50 percent reduction in the time spent refining AI outputs, because people aren't rebuilding from scratch what already exists.

Start simple. A shared Notion database with four fields (prompt name, use case, full prompt text, and the AI tool it was built for) is enough to get started. The goal is to create a place where knowledge accumulates instead of evaporating when someone closes a chat window.
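To make the four fields concrete, here is a minimal sketch of the same structure in plain Python. It does not use Notion or any Notion API. The class names `PromptEntry` and `PromptLibrary`, the `search` method, and the sample prompts are hypothetical, included only to show how a searchable, use-case-organized library might behave.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptEntry:
    """One row in the library: the four fields described above."""
    name: str
    use_case: str
    prompt_text: str
    ai_tool: str


class PromptLibrary:
    """An in-memory stand-in for a shared, searchable prompt database."""

    def __init__(self):
        self._entries = []

    def add(self, entry):
        self._entries.append(entry)

    def search(self, keyword):
        """Return entries whose name or use case contains the keyword."""
        needle = keyword.lower()
        return [
            entry for entry in self._entries
            if needle in entry.name.lower() or needle in entry.use_case.lower()
        ]


# Sample entries are invented for illustration.
library = PromptLibrary()
library.add(PromptEntry(
    name="Client proposal draft",
    use_case="Sales proposals",
    prompt_text="Draft a proposal for {client} covering {scope}.",
    ai_tool="ChatGPT",
))
library.add(PromptEntry(
    name="Weekly status summary",
    use_case="Operations reporting",
    prompt_text="Summarize these notes into five bullet points: {notes}",
    ai_tool="Claude",
))

print([entry.name for entry in library.search("proposal")])
# ['Client proposal draft']
```

Whatever tool holds the library, the point is the same: someone in marketing who searches "proposal" should find what sales already built.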

Set governance expectations before problems surface.

AI governance sounds like a legal department concern. In practice, it's a frontline management issue. Every day your employees use AI without clear guidelines is a day someone is making judgment calls about what's safe to share, what's appropriate to publish, and what tools are acceptable to use. Most of those judgment calls are reasonable. Some aren't. And the ones that aren't can be costly.

Before training rolls out, establish three basic governance expectations:

- First, which tools are approved for use and which aren't.

- Second, what kinds of information should never be entered into an AI platform without proper privacy settings enabled.

- Third, who reviews AI-generated content before it reaches clients, customers, or the public.
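Those three expectations are simple enough to write down as data, which is one way to keep the policy to a single page. The Python sketch below is illustrative only: the `GovernancePolicy` class, its field names, the tool names, the data categories, and the reviewer roles are all assumptions, not a recommended policy.

```python
from dataclasses import dataclass


@dataclass
class GovernancePolicy:
    approved_tools: set    # first: which tools are approved
    restricted_data: set   # second: information that needs privacy settings
    reviewers: dict        # third: audience -> who reviews before release

    def check(self, tool, data_kinds, privacy_enabled, audience):
        """Return (issues, reviewer) for a planned use of AI."""
        issues = []
        if tool not in self.approved_tools:
            issues.append(f"{tool} is not an approved tool")
        blocked = sorted(set(data_kinds) & self.restricted_data)
        if blocked and not privacy_enabled:
            issues.append(
                "restricted data without privacy settings: " + ", ".join(blocked)
            )
        # None means no reviewer has been assigned for this audience.
        return issues, self.reviewers.get(audience)


# Hypothetical policy values, for illustration only.
policy = GovernancePolicy(
    approved_tools={"ChatGPT", "Claude"},
    restricted_data={"client data", "financial records"},
    reviewers={"clients": "account manager", "public": "marketing lead"},
)

issues, reviewer = policy.check(
    tool="FreeChatTool",
    data_kinds=["client data"],
    privacy_enabled=False,
    audience="clients",
)
print(issues)
print(reviewer)  # account manager
```

A check like this does not replace judgment. It only makes the three rules explicit enough that nobody has to guess.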

These don't need to be lengthy policies. A single page of clear, practical guidelines is more likely to be followed than a 20-page document nobody reads. The goal is to make sure every person in your organization knows the rules before they need them, not after something goes wrong.

When you decided to read this post, something was already nagging at you. Maybe it was the meeting where two departments described their AI processes and clearly had no idea what the other was doing. Perhaps it was the realization that your best AI user is one resignation letter away from taking everything they know out the door. Or maybe it was the quiet suspicion that your organization is spending money on tools nobody fully knows how to use.

That feeling is worth paying attention to. It's telling you something real.

Rolling out AI training across an entire organization isn't complicated, but it does require intention.

The organizations that get this right see capability compound:

- Prompts that get added to the shared library make the next person faster.

- Departments that adopt common standards raise the floor for everyone else.

- New hires who onboard into a structured AI environment contribute sooner and cost less to bring up to speed.

The AI SkillsBuilder® Essentials is a structured entry point for organizations that are done with informal AI adoption and ready to build something that compounds. Built around the 10 Stages of AI Mastery, the AI Strategy Canvas®, and Scalable Prompt Engineering™, it gives your entire organization a common language, framework, and standard from day one. Register today.